Face Verification Library

Introduction

The Face Verification Library is a portable C++ library for face verification, designed for real-time processing on client devices (mobile and desktop).

The SDK provides wrappers in the following languages:

- C

- Python

- C# (.NET)

Features

- Face detection

- Face embedding extraction for verification

- Embedding comparison with similarity scoring

- Async API with both std::future and callback interfaces

- Configurable concurrency

- Support for 3rd party face detectors

- Cross-platform (desktop, mobile)
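The difference between the future and callback styles mentioned in the feature list can be illustrated with a minimal Python sketch. It uses only the standard library; `fake_detect` is a hypothetical stand-in for a slow call and is not part of the SDK, and the `max_workers` value only plays the role of a configurable concurrency limit.

```python
from concurrent.futures import Future, ThreadPoolExecutor


def fake_detect(image_id: str) -> list[str]:
    """Hypothetical stand-in for a slow detection call; not an SDK function."""
    return [f"{image_id}-face-0", f"{image_id}-face-1"]


callback_results: list[list[str]] = []


def on_done(future: Future) -> None:
    # Callback style: invoked once the future holds a result.
    callback_results.append(future.result())


# max_workers acts as the concurrency limit in this sketch.
with ThreadPoolExecutor(max_workers=2) as pool:
    # Future style: submit the work, then block on result() when it is needed.
    future_a = pool.submit(fake_detect, "image1")
    faces_a = future_a.result()

    # Callback style: register a function that runs on completion.
    future_b = pool.submit(fake_detect, "image2")
    future_b.add_done_callback(on_done)

# Leaving the with-block waits for all submitted work to finish.
print(faces_a)
print(callback_results)
```

The future style suits code that needs the result at a known point; the callback style avoids blocking the caller at all.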

Supported Platforms

Hardware Requirements

- CPU: Any modern 64-bit CPU (x86-64 with AVX, ARMv8)

- GPU: No special requirement

- RAM: 2 GB of available RAM required

- Camera: Minimum resolution: 640x480

Software Requirements

The SDK is regularly tested on the following operating systems:

Platform / Architecture Support

Available APIs

- C++ - Core native API

- C - C-compatible API for FFI integration

- Python - Python bindings via pybind11

- .NET - C# bindings with async/await support

API Comparison

Create verifier

- C++: FaceVerifier()

- C: fvl_face_verifier_new()

- Python: FaceVerifier()

- .NET: FaceVerifier()

Detect faces

- C++: detectFaces()

- C: fvl_face_verifier_detect_faces()

- Python: detect_faces()

- .NET: DetectFacesAsync()

Embed face

- C++: embedFace()

- C: fvl_face_verifier_embed_face()

- Python: embed_face()

- .NET: EmbedFaceAsync()

Compare faces

- C++: compareFaces()

- C: fvl_face_verifier_compare_faces()

- Python: compare_faces()

- .NET: CompareFaces()

Get model name

- C++: getModelName()

- C: fvl_face_verifier_get_model_name()

- Python: get_model_name()

- .NET: ModelName

Get SDK version

- C++: getSDKVersion()

- C: fvl_face_verifier_get_sdk_version()

- Python: get_sdk_version_string()

- .NET: SdkVersion
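The compare operation listed above returns a similarity score for two embeddings. This document does not state which metric the SDK uses, so the sketch below assumes cosine similarity, a common choice for face embeddings, purely for illustration. The function names are hypothetical, and the 0.3 threshold mirrors the value used in the quick examples in this document.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two embedding vectors (assumed metric)."""
    if len(a) != len(b):
        raise ValueError("embeddings must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0  # a zero vector has no direction; treat it as no match
    return dot / (norm_a * norm_b)


# Threshold taken from the quick examples; tune it for your own use case.
THRESHOLD = 0.3


def is_same_person(a: list[float], b: list[float], threshold: float = THRESHOLD) -> bool:
    """Hypothetical helper: True when the similarity exceeds the threshold."""
    return cosine_similarity(a, b) > threshold


print(is_same_person([1.0, 0.0], [1.0, 0.0]))
print(is_same_person([1.0, 0.0], [0.0, 1.0]))
```

A higher threshold lowers the false-accept rate at the cost of more false rejects, so the value is a trade-off rather than a fixed constant.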


Quick Examples

C++

#include "faceverifier.h"

#include <opencv2/core.hpp>
#include <opencv2/imgcodecs.hpp>

#include <iostream>
#include <vector>

int main()
{
    // Load the model and the two images to compare (OpenCV reads images as BGR).
    fvl::FaceVerifier verifier("model/model.realZ");
    cv::Mat image1 = cv::imread("image1.jpg");
    cv::Mat image2 = cv::imread("image2.jpg");

    // Detect faces; get() blocks until the asynchronous result is ready.
    std::vector<fvl::Face> faces1 = verifier.detectFaces({image1.ptr(), image1.cols, image1.rows, static_cast<int>(image1.step1()), fvl::ImageFormat::BGR}).get();
    std::vector<fvl::Face> faces2 = verifier.detectFaces({image2.ptr(), image2.cols, image2.rows, static_cast<int>(image2.step1()), fvl::ImageFormat::BGR}).get();

    // Compute one embedding per detected face.
    std::vector<std::vector<float>> embeddings1, embeddings2;
    for (const fvl::Face& face : faces1)
        embeddings1.push_back(verifier.embedFace(face).get());
    for (const fvl::Face& face : faces2)
        embeddings2.push_back(verifier.embedFace(face).get());

    // Compare every face in image 1 with every face in image 2.
    for (size_t i = 0; i < embeddings1.size(); ++i)
        for (size_t j = 0; j < embeddings2.size(); ++j)
            if (verifier.compareFaces(embeddings1[i], embeddings2[j]).similarity > 0.3) {
                fvl::BoundingBox bbox1 = faces1[i].bounding_box();
                fvl::BoundingBox bbox2 = faces2[j].bounding_box();
                std::cout << "(" << bbox1.x << ", " << bbox1.y << ", " << bbox1.width << ", " << bbox1.height << ") from image 1 and ";
                std::cout << "(" << bbox2.x << ", " << bbox2.y << ", " << bbox2.width << ", " << bbox2.height << ") from image 2 are the same person";
                std::cout << std::endl;
            }

    return 0;
}

Python

import realeyes.face_verification as fvl
import cv2

verifier = fvl.FaceVerifier('model/model.realZ')

image1 = cv2.imread('image1.jpg')[:, :, ::-1]  # OpenCV reads BGR, we need RGB
image2 = cv2.imread('image2.jpg')[:, :, ::-1]  # OpenCV reads BGR, we need RGB

faces1 = verifier.detect_faces(image1)
faces2 = verifier.detect_faces(image2)

embeddings1 = [verifier.embed_face(face) for face in faces1]
embeddings2 = [verifier.embed_face(face) for face in faces2]

# Compare every face in image 1 with every face in image 2
for i, e1 in enumerate(embeddings1):
    for j, e2 in enumerate(embeddings2):
        if verifier.compare_faces(e1, e2).similarity > 0.3:
            print(f'{faces1[i]} from image 1 and {faces2[j]} from image 2 are the same person!')

C#

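using System;
using System.Collections.Generic;
using System.Linq;
using System.Runtime.InteropServices;
using System.Threading.Tasks;
using Realeyes.FaceVerification;
using OpenCvSharp;

using var verifier = new FaceVerifier("model/model.realZ");

using var image1 = Cv2.ImRead("image1.jpg");
using var image2 = Cv2.ImRead("image2.jpg");

// Copy the raw BGR pixels of each Mat into a byte array.
// Note: Mat.ToBytes() returns an encoded image (PNG by default), not raw pixels.
var imageData1 = new byte[image1.Total() * image1.ElemSize()];
Marshal.Copy(image1.Data, imageData1, 0, imageData1.Length);
var imageData2 = new byte[image2.Total() * image2.ElemSize()];
Marshal.Copy(image2.Data, imageData2, 0, imageData2.Length);

var header1 = new ImageHeader(imageData1, image1.Width, image1.Height,
    image1.Width * image1.Channels(), ImageFormat.BGR);
var header2 = new ImageHeader(imageData2, image2.Width, image2.Height,
    image2.Width * image2.Channels(), ImageFormat.BGR);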

// Detect faces in parallel
var detectTask1 = verifier.DetectFacesAsync(header1);
var detectTask2 = verifier.DetectFacesAsync(header2);

using var faces1 = await detectTask1;
using var faces2 = await detectTask2;

// Embed all faces in parallel
var embeddingTasks = new List<Task<float[]>>();
foreach (var face in faces1)
    embeddingTasks.Add(verifier.EmbedFaceAsync(face));
foreach (var face in faces2)
    embeddingTasks.Add(verifier.EmbedFaceAsync(face));

var allEmbeddings = await Task.WhenAll(embeddingTasks);
var embeddings1 = allEmbeddings.Take(faces1.Count).ToArray();
var embeddings2 = allEmbeddings.Skip(faces1.Count).ToArray();

// Compare embeddings
for (int i = 0; i < embeddings1.Length; i++)
{
    for (int j = 0; j < embeddings2.Length; j++)
    {
        var match = verifier.CompareFaces(embeddings1[i], embeddings2[j]);
        if (match.Similarity > 0.3f)
        {
            var bbox1 = faces1[i].BoundingBox;
            var bbox2 = faces2[j].BoundingBox;
            Console.WriteLine($"({bbox1.X}, {bbox1.Y}, {bbox1.Width}, {bbox1.Height}) from image 1 and " +
                $"({bbox2.X}, {bbox2.Y}, {bbox2.Width}, {bbox2.Height}) from image 2 are the same person!");
        }
    }
}

Results

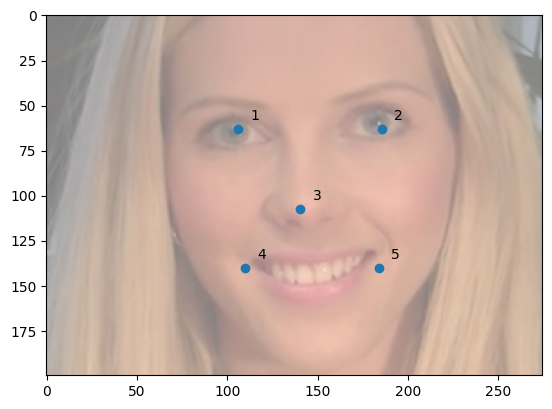

The Face objects consist of the following members:

- bounding_box: Bounding box of the detected face (left, top, width, height).

- confidence: Confidence of the detection ([0,1] — higher is better).

- landmarks: 5 landmarks from the face:

  - Left eye

  - Right eye

  - Nose (tip)

  - Left mouth corner

  - Right mouth corner
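To make the shape of these members concrete, here is a minimal Python sketch of a comparable data structure. It is not the SDK's actual `Face` type: the class names, field types, and landmark names are assumptions chosen to mirror the list above.

```python
from dataclasses import dataclass

# Landmark order assumed from the list above; the names are hypothetical.
LANDMARK_NAMES = (
    "left_eye",
    "right_eye",
    "nose_tip",
    "left_mouth_corner",
    "right_mouth_corner",
)


@dataclass(frozen=True)
class BoundingBox:
    x: int  # left
    y: int  # top
    width: int
    height: int


@dataclass(frozen=True)
class Face:
    bounding_box: BoundingBox
    confidence: float  # in [0, 1], higher is better
    landmarks: tuple[tuple[float, float], ...]  # five (x, y) points

    def __post_init__(self) -> None:
        if not 0.0 <= self.confidence <= 1.0:
            raise ValueError("confidence must be in [0, 1]")
        if len(self.landmarks) != len(LANDMARK_NAMES):
            raise ValueError("exactly 5 landmarks are expected")

    def landmark(self, name: str) -> tuple[float, float]:
        """Look up a landmark by its (hypothetical) name."""
        return self.landmarks[LANDMARK_NAMES.index(name)]


face = Face(
    bounding_box=BoundingBox(x=10, y=20, width=100, height=120),
    confidence=0.98,
    landmarks=((35.0, 60.0), (85.0, 60.0), (60.0, 85.0), (40.0, 110.0), (80.0, 110.0)),
)
print(face.landmark("nose_tip"))
```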

3rd Party Face Detector

It is possible to calculate the embedding of a face which was detected with a different library. You can create a Face object by specifying the source image and the landmarks.
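When landmarks come from another detector, they usually need to be reordered and validated before they can be handed over. The sketch below shows one way to do that in plain Python. The expected order is assumed from the landmark list in this document, and both the helper `order_landmarks` and the key names of the other detector are hypothetical; consult the API reference for the actual way to construct a Face object.

```python
# Order assumed from the landmark list in this document; names are hypothetical.
EXPECTED_ORDER = (
    "left_eye",
    "right_eye",
    "nose_tip",
    "left_mouth_corner",
    "right_mouth_corner",
)


def order_landmarks(
    detected: dict[str, tuple[float, float]],
    key_map: dict[str, str],
) -> list[tuple[float, float]]:
    """Return the five landmarks as (x, y) pairs in the expected order.

    detected: landmark points keyed by the other detector's own names.
    key_map:  maps each expected name to the other detector's key.
    """
    ordered = []
    for name in EXPECTED_ORDER:
        source_key = key_map[name]
        if source_key not in detected:
            raise KeyError(f"missing landmark: {source_key}")
        x, y = detected[source_key]
        ordered.append((float(x), float(y)))
    return ordered


# Hypothetical output of some other face detector.
other_detector_output = {
    "mouth_r": (80, 110),
    "mouth_l": (40, 110),
    "nose": (60, 85),
    "eye_r": (85, 60),
    "eye_l": (35, 60),
}
KEY_MAP = {
    "left_eye": "eye_l",
    "right_eye": "eye_r",
    "nose_tip": "nose",
    "left_mouth_corner": "mouth_l",
    "right_mouth_corner": "mouth_r",
}

landmarks = order_landmarks(other_detector_output, KEY_MAP)
print(landmarks)
```

Whether "left" means the subject's left or the viewer's left differs between detectors, so that convention is worth checking before mapping keys.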

Dependencies

The public C++ API hides all implementation details from the user and depends only on the C++17 Standard Library. It also provides a binary-compatible interface, so the underlying implementation can change without recompiling user code.

Release Notes

- Version 1.5.0 (15 Dec 2025)

  - Improved performance on ARM CPUs

  - Cleaner .NET API

  - New public C API

- Version 1.4.0 (7 Feb 2024)

  - Experimental .NET support

- Version 1.3.0 (9 Jun 2023)

  - Add model config version 2 support

- Version 1.2.0 (9 Jun 2023)

  - Add support for AES encryption

- Version 1.1.0 (3 Apr 2023)

  - Add support for 3rd party face detectors

- Version 1.0.0 (1 Mar 2023)

  - Initial release

3rd Party Licenses

While the SDK is released under a proprietary license, it uses the following open-source projects under their respective licenses:

License

Proprietary - Realeyes Data Services Ltd.